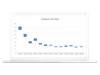

Put simply, the Violative View Rate (VVR) helps us determine what percentage of views on YouTube comes from content that violates our policies. Our teams started tracking this back in 2017, and across the company it’s the primary metric used to measure our responsibility work. As we’ve expanded our investment in people and technology, we’ve seen the VVR fall. The most recent VVR is 0.16-0.18%, which means that out of every 10,000 views on YouTube, 16 to 18 come from violative content. This is down by over 70% when compared to the same quarter of 2017, in large part thanks to our investments in machine learning. Going forward, we will update the VVR quarterly in our Community Guidelines Enforcement Report.
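To make the arithmetic behind that figure concrete, here is a minimal sketch of the conversion from a percentage to a count per 10,000 views. The function name `views_per_10k` is hypothetical and used only for illustration.

```python
def views_per_10k(rate_percent: float) -> float:
    """Convert a rate given as a percentage into a count per 10,000 views."""
    # Illustrative helper only; not part of any YouTube tooling.
    return rate_percent / 100 * 10_000


# The reported range of 0.16% to 0.18%, expressed per 10,000 views.
low = views_per_10k(0.16)
high = views_per_10k(0.18)
print(f"{low:.0f} to {high:.0f} violative views per 10,000")
```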

VVR data gives critical context around how we're protecting our community. Other metrics, like the turnaround time to remove a violative video, are important, but they don't fully capture the actual impact of violative content on the viewer. For example, compare a violative video that got 100 views but stayed on our platform for more than 24 hours with content that reached thousands of views in the first few hours before removal. Which ultimately has more impact? We believe the VVR is the best way for us to understand how harmful content impacts viewers, and to identify where we need to make improvements.

We calculate VVR by taking a sample of videos on YouTube and sending it to our content reviewers, who tell us which videos violate our policies and which do not. By sampling, we gain a more comprehensive view of the violative content we might not be catching with our systems. However, the VVR will fluctuate, both up and down. For example, immediately after we update a policy, you might see this number temporarily go up as our systems ramp up to catch content that is newly classified as violative.
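As a rough illustration of how a rate estimated from a reviewed sample comes with some uncertainty, the sketch below computes a point estimate and a Wilson score interval from a count of items that reviewers flagged. This is a generic statistical technique, not a description of our actual pipeline: the function name, the 95% confidence level, the sample numbers, and the assumption that the sample is representative of views are all hypothetical.

```python
import math


def estimate_violative_rate(violative_count: int, sample_size: int, z: float = 1.96):
    """Estimate a rate from a reviewed sample.

    Returns (point_estimate, lower, upper), where the bounds form a Wilson
    score interval. z=1.96 corresponds to roughly 95% confidence (an
    assumption chosen for this example).
    """
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    if not 0 <= violative_count <= sample_size:
        raise ValueError("violative_count must be between 0 and sample_size")

    p = violative_count / sample_size
    denom = 1 + z**2 / sample_size
    center = (p + z**2 / (2 * sample_size)) / denom
    half_width = (
        z * math.sqrt(p * (1 - p) / sample_size + z**2 / (4 * sample_size**2)) / denom
    )
    # Clamp to [0, 1] to guard against tiny floating-point overshoot.
    return p, max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical numbers: reviewers flag 170 items in a sample of 100,000.
rate, lower, upper = estimate_violative_rate(170, 100_000)
print(f"estimate {rate:.4%}, interval {lower:.4%} to {upper:.4%}")
```

A larger sample narrows the interval, which is one reason a sampled rate is often reported as a range rather than a single number.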